Tesla is working to increase its computing power so it can process the massive amounts of data needed to improve the Autopilot offered today and to develop the fully autonomous driving features planned for the future. To that end, the company recently presented its new supercomputer, the fifth most powerful in the world.

Typically, companies don’t talk about the supercomputers they use internally to process data. Andrej Karpathy, Director of Artificial Intelligence at Tesla, made an exception. At the Conference on Computer Vision and Pattern Recognition (CVPR), he provided details of the company’s latest supercomputer: the system should achieve 1.8 exaflops at half precision (FP16) for artificial intelligence workloads.

Tesla CEO Elon Musk has already been talking up the Dojo project, an insanely powerful supercomputer that should be ready by the end of the year. According to Karpathy, the latest supercomputer is only a precursor to Dojo, and a much more powerful system will follow soon.

Karpathy mentioned that in terms of FLOPS (floating-point operations per second, a measure of how many calculations a processor such as a CPU or GPU can perform each second), Tesla’s new supercomputer is the fifth most powerful in the world.

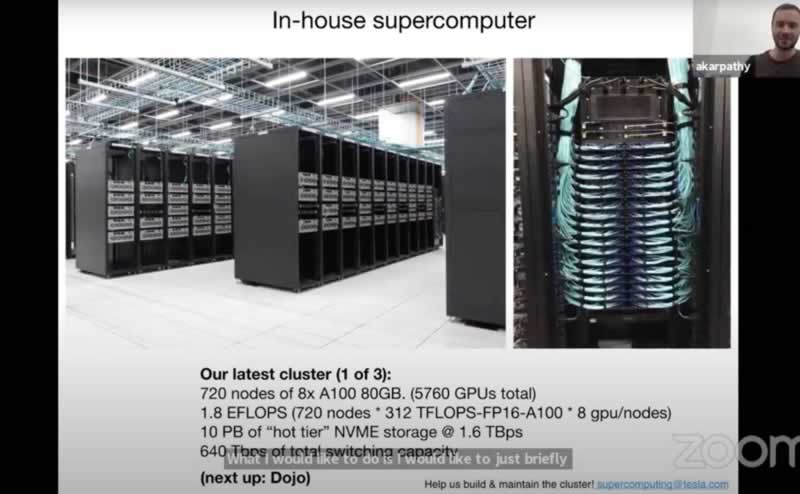

Karpathy shared a few specs for this supercomputer:

- 720 nodes, each with 8x A100 80GB GPUs (5,760 GPUs in total).

- 1.8 EFLOPS (720 nodes × 8 GPUs per node × 312 TFLOPS FP16 per A100).

- 10 PB of “hot tier” NVMe storage.

- 640 Tbps of total switching capacity.
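
The 1.8 EFLOPS figure in the list above follows directly from the node count, the GPUs per node, and the per-GPU throughput. As a rough sanity check, here is a minimal Python sketch of that arithmetic. The function and constant names are illustrative, and the 312 TFLOPS value is taken as the peak FP16 figure quoted in the list, not a measured result.

```python
# Figures quoted in the spec list; names are illustrative only.
NODES = 720
GPUS_PER_NODE = 8
A100_FP16_TFLOPS = 312  # peak FP16 throughput per A100, as quoted


def cluster_peak(nodes=NODES, gpus_per_node=GPUS_PER_NODE,
                 tflops_per_gpu=A100_FP16_TFLOPS):
    """Return (total GPU count, theoretical peak in EFLOPS)."""
    total_gpus = nodes * gpus_per_node
    total_tflops = total_gpus * tflops_per_gpu
    # 1 EFLOPS = 1,000,000 TFLOPS
    return total_gpus, total_tflops / 1_000_000


total_gpus, eflops = cluster_peak()
print(f"{total_gpus} GPUs, {eflops:.2f} EFLOPS peak FP16")
```

This is a theoretical peak obtained by simple multiplication; sustained training throughput on real workloads would normally be lower.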

According to Karpathy, Tesla has a 1.5-petabyte dataset consisting of one million 36 fps videos recorded by the eight cameras on a Tesla vehicle. This data is stored in the supercomputer on an NVMe SSD array with a capacity of 10 petabytes and a throughput of 1.6 TB/s. The Nvidia accelerators are used to train neural networks on this data, which is why the interconnect fabric also needs to be very fast.
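
To put those storage numbers in perspective, the following back-of-the-envelope sketch estimates the average size of one video and how long a single full read of the dataset would take. It assumes decimal units (1 PB = 10^15 bytes) and that the quoted 1.6 TB/s could be sustained for the whole read; both are assumptions, and the variable names are illustrative.

```python
# Figures quoted in the article; decimal units are an assumption.
DATASET_BYTES = 1.5e15        # 1.5 PB dataset
NUM_VIDEOS = 1_000_000        # one million videos
STORAGE_BYTES_PER_S = 1.6e12  # 1.6 TB/s storage throughput

# Average size of one video in gigabytes.
avg_video_gb = DATASET_BYTES / NUM_VIDEOS / 1e9

# Time to stream the whole dataset once at the quoted throughput.
full_pass_seconds = DATASET_BYTES / STORAGE_BYTES_PER_S
full_pass_minutes = full_pass_seconds / 60

print(f"Average video size: {avg_video_gb:.1f} GB")
print(f"One full pass over the dataset: {full_pass_minutes:.1f} minutes")
```

Under these assumptions, each video averages about 1.5 GB and one pass over the full dataset takes roughly a quarter of an hour, which illustrates why storage and network throughput matter as much as raw GPU compute.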

Andrej Karpathy noted: “For us, computer vision is the bread and butter of what we do and what enables Autopilot. And for that to work really well, we need to master the data from the fleet, and train massive neural nets and experiment a lot. So we invested a lot into the compute. In this case, we have a cluster that we built with 720 nodes of 8x A100 of the 80GB version. So this is a massive supercomputer.”